Development and Evaluation of AI Systems in Healthcare: A CNIL Compliance Framework and HAS-CNIL Operational Guide

The French National Commission on Informatics and Liberty (CNIL) has published a regulatory framework for the development and evaluation of Artificial Intelligence (AI) systems in healthcare. Alongside it, the CNIL and the High Authority for Health (HAS) have jointly produced an operational guide on deploying these systems. Together, the two publications extend the CNIL’s general recommendations on AI to the high-stakes context of medical data and patient care. They arrive as the European Union prepares for the phased application of the AI Act (known in French as the RIA, règlement sur l’intelligence artificielle), signaling a proactive effort by French regulators to align domestic practice with forthcoming EU-wide rules.

The new guidelines are aimed at Data Protection Officers (DPOs), project managers, and healthcare stakeholders, including hospital administrations and technology developers, who use health data to build or validate AI systems. By providing a structured compliance roadmap, the authorities seek to reconcile rapid technological innovation with the protection of sensitive personal data and clinical safety.

A Three-Stage Lifecycle for AI in Healthcare

The CNIL framework identifies three distinct phases in the lifecycle of a healthcare AI system (SIA, for système d’intelligence artificielle). Some of these phases are optional, but each carries specific legal obligations under the General Data Protection Regulation (GDPR) and the French Data Protection Act (Loi Informatique et Libertés).

1. The Establishment of a Health Data Warehouse (EDS)

The first stage, which is optional, is the creation of a Health Data Warehouse (Entrepôt de Données de Santé, or EDS). At this stage, organizations must anticipate the future uses of the data, which may include the development of various AI systems. The CNIL specifies that developing AI for healthcare is legally treated as "research, study, or evaluation in the field of health." This position is consistent with the GDPR’s broad interpretation of "scientific research," which explicitly includes technological development and demonstration (Recital 159). Crucially, any processing of data extracted from a warehouse constitutes a separate processing activity, which must meet the requirements of the subsequent development stages.

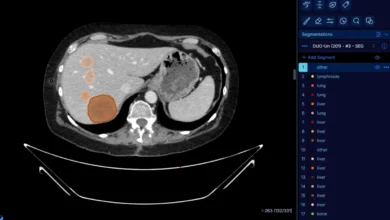

2. The Creation of Dedicated Training Databases

The second stage is building a database dedicated to the development of a specific AI system. This covers data preparation, model training and optimization, and validation of both technical and clinical performance. The CNIL takes a pragmatic stance, accepting that these operations can be grouped under a single general purpose: "the development of an AI system." For systems intended to become Medical Devices (MDs), this purpose extends from initial training until the developer obtains CE marking or any other required market authorization.

3. Post-Deployment Evaluation

The third stage focuses on evaluating the system’s impact once it is deployed in a real-world clinical setting. This evaluation examines how the AI affects care pathways, professional practices, and epidemiological surveillance. Because this stage involves measuring real-world outcomes using patient data, it is classified as a distinct research activity, subject to specific prior formalities and ethical reviews.
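To make the rule that data extracted from a warehouse starts a separate processing activity more concrete, here is a minimal Python sketch of a hypothetical register of processing activities. Extracting data from an EDS for a development project creates a new entry with its own purpose and a link back to its source, rather than extending the warehouse’s own processing. The class names, fields, and example purposes are illustrative assumptions, not a format defined by the CNIL.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum
from typing import Optional


class Stage(Enum):
    """The three lifecycle stages identified by the CNIL framework."""
    EDS = "health data warehouse (optional)"
    DEVELOPMENT = "development of an AI system"
    POST_DEPLOYMENT = "post-deployment evaluation"


@dataclass
class ProcessingActivity:
    """One entry in a hypothetical register of processing activities."""
    name: str
    stage: Stage
    purpose: str
    source: Optional[ProcessingActivity] = None  # activity the data was extracted from


@dataclass
class Register:
    activities: list[ProcessingActivity] = field(default_factory=list)

    def add(self, activity: ProcessingActivity) -> ProcessingActivity:
        self.activities.append(activity)
        return activity

    def extract(self, source: ProcessingActivity, name: str,
                stage: Stage, purpose: str) -> ProcessingActivity:
        # Data pulled out of an existing activity (e.g. an EDS) is recorded as a
        # NEW activity with its own purpose, never folded into the source entry.
        return self.add(ProcessingActivity(name, stage, purpose, source=source))

    def lineage(self, activity: ProcessingActivity) -> list[str]:
        """Names from the given activity back to its original data source."""
        chain = []
        current: Optional[ProcessingActivity] = activity
        while current is not None:
            chain.append(current.name)
            current = current.source
        return chain


# Hypothetical usage: one warehouse, one development project drawing on it.
register = Register()
eds = register.add(ProcessingActivity("hospital EDS", Stage.EDS,
                                      "constitution of a health data warehouse"))
dev = register.extract(eds, "triage model dataset", Stage.DEVELOPMENT,
                       "the development of an AI system")
print(register.lineage(dev))
```

Keeping the link to the source makes it easy to show which warehouse fed which project, while each project still carries its own purpose and formalities.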

Operational Recommendations for AI Deployers: The HAS-CNIL Guide

Complementing the CNIL’s legal framework is a joint guide with the HAS aimed at "deployers." Under the European AI Act, a deployer is any natural or legal person, public authority, agency, or other body using an AI system under its authority, except in the course of a personal, non-professional activity. In healthcare, this typically means a hospital or a private clinic. The guide follows a chronological approach, tracing a healthcare facility’s responsibilities from the initial decision to acquire an AI tool through to its decommissioning.

Procurement and Contractual Vigilance

The guide sets out several "points of vigilance" for healthcare providers during the acquisition phase. Before signing any contract, deployers should obtain clear and complete information on:

- The intended purpose and clinical context of use of the AI system.

- The conditions under which the model was developed and the populations used for training.

- Known performance limits, potential biases, and eligible patient profiles.

- Human oversight mechanisms and procedures for handling malfunctions.

- Environmental impacts and data flow diagrams.
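A deployer could track these items as a simple pre-signature checklist. The Python sketch below assumes the supplier’s answers arrive as a dictionary keyed by hypothetical item labels and reports which of the five items listed above are missing or empty. The keys and the dossier format are assumptions made for illustration; the guide does not prescribe any data format.

```python
# Information the HAS-CNIL guide asks deployers to obtain before signing.
# The dictionary keys are hypothetical labels chosen for this sketch.
REQUIRED_INFORMATION = {
    "intended_use": "Intended purpose and clinical context of use",
    "development_conditions": "Development conditions and training populations",
    "limits_and_biases": "Known performance limits, potential biases, eligible patients",
    "human_oversight": "Human oversight mechanisms and malfunction procedures",
    "environment_and_data_flows": "Environmental impacts and data flow diagrams",
}


def missing_information(vendor_dossier: dict[str, str]) -> list[str]:
    """Return descriptions of required items that are absent or blank."""
    return [
        description
        for key, description in REQUIRED_INFORMATION.items()
        if not vendor_dossier.get(key, "").strip()
    ]


# Hypothetical partial dossier received from a supplier.
dossier = {
    "intended_use": "triage support for chest X-rays",
    "human_oversight": "a radiologist validates every output",
}
for item in missing_information(dossier):
    print("Missing:", item)
```

Treating blank answers as missing avoids counting placeholder fields as satisfied.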

Regulatory compliance is a non-negotiable prerequisite. Deployers should verify whether the AI system qualifies as a Medical Device, confirm its GDPR compliance, and ensure that any health data is stored by a certified Health Data Hosting (HDS, hébergeur de données de santé) provider. The guide also stresses interoperability with existing Hospital Information Systems (HIS) to avoid data silos.

Formalizing Responsibilities (RACI and SLAs)

During the contractual phase, the HAS and CNIL recommend using a RACI matrix (Responsible, Accountable, Consulted, Informed) to clearly allocate duties between the AI supplier and the healthcare provider. Contracts should include robust Service Level Agreements (SLAs), provisions for a Proof of Concept (POC) phase, and clearly defined performance thresholds. The DPO should be closely involved in these negotiations so that data reuse, data sovereignty, and storage within the European Economic Area (EEA) are contractually secured.
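A RACI matrix is essentially a table mapping each task to the role each party plays. The Python sketch below encodes a hypothetical supplier/hospital matrix and checks two widely used RACI conventions: every task has exactly one Accountable party and at least one Responsible party. The tasks, party names, and the "AR" notation for a party that is both Accountable and Responsible are assumptions for illustration, not content from the guide.

```python
from collections import Counter

VALID_ROLES = set("RACI")

# Hypothetical matrix: task -> {party: roles}; "AR" = Accountable and Responsible.
RACI = {
    "model updates": {"supplier": "AR", "hospital_it": "C", "clinical_lead": "I"},
    "incident handling": {"supplier": "R", "hospital_it": "R", "clinical_lead": "A"},
    "SLA monitoring": {"hospital_it": "R", "supplier": "C"},  # no "A", on purpose
}


def check_raci(matrix: dict[str, dict[str, str]]) -> list[str]:
    """Flag tasks that break common RACI conventions."""
    problems = []
    for task, assignments in matrix.items():
        # Count every role letter assigned to any party for this task.
        letters = Counter(letter for roles in assignments.values() for letter in roles)
        if letters["A"] != 1:
            problems.append(f"{task}: expected exactly one 'A', found {letters['A']}")
        if letters["R"] < 1:
            problems.append(f"{task}: no 'R' assigned")
        unknown = set(letters) - VALID_ROLES
        if unknown:
            problems.append(f"{task}: unknown role letters {sorted(unknown)}")
    return problems


print(check_raci(RACI))
```

Running such a check before signature helps surface tasks, like SLA monitoring here, that no one is ultimately answerable for.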

Training, Ethics, and the Human Element

Recognizing that a technology is only as safe as its users, the guidelines propose tiered training. General awareness training is recommended for all healthcare professionals, while in-depth training is reserved for key roles such as the DPO or the Chief Information Officer (CIO). Crucially, the guide recommends that specific training be mandatory before any professional is allowed to use an AI system in clinical practice.

To formalize these ethical commitments, healthcare institutions are encouraged to draft an "AI Charter." This document should list prohibited practices, such as using unauthorized consumer-grade AI for clinical diagnosis, and provide a "white list" of approved use cases that present minimal risk to patient safety.
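An institution that wants to enforce its charter and the training prerequisite inside its own tooling could apply them as an ordered access check. In the minimal Python sketch below, a request is refused if the use case is prohibited, escalated if it is not on the white list, and refused if the user has not completed training on that specific system. The use cases, system names, and the `Professional` record are hypothetical; the guide describes the charter and training policy, not any software mechanism.

```python
from dataclasses import dataclass, field

# Hypothetical excerpt of an institutional AI Charter.
WHITE_LIST = {"appointment scheduling", "administrative document summarization"}
PROHIBITED = {"clinical diagnosis with a consumer-grade chatbot"}


@dataclass
class Professional:
    name: str
    trained_on: set[str] = field(default_factory=set)  # systems with completed training


def may_use(user: Professional, system: str, use_case: str) -> tuple[bool, str]:
    """Apply the charter first, then the mandatory-training prerequisite."""
    if use_case in PROHIBITED:
        return False, "prohibited by the AI Charter"
    if use_case not in WHITE_LIST:
        # Not forbidden, but not pre-approved either: needs a human decision.
        return False, "not pre-approved: escalate for case-by-case review"
    if system not in user.trained_on:
        return False, f"mandatory training on {system} not completed"
    return True, "allowed"


alice = Professional("Alice", {"scheduling-assistant"})
print(may_use(alice, "scheduling-assistant", "appointment scheduling"))
```

Checking the charter before training means a prohibited use is refused even for fully trained staff.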

Addressing the Rise of Generative AI

The guide specifically addresses the recent surge in Generative AI (GenAI), such as Large Language Models (LLMs). While acknowledging their potential for administrative tasks and medical summarization, the HAS and CNIL advocate "reasoned use." Healthcare providers are urged to keep these tools under strict control and to make staff aware of the risk of "hallucinations" (plausible but factually incorrect outputs) and of the dangers of entering sensitive patient data into public, unsecured AI interfaces.

Timeline and the European AI Act (RIA) Context

These French initiatives are designed to prepare the domestic market for the European AI Act, which entered into force on August 1, 2024, and applies in stages. The key milestones are:

- August 2, 2026: Most obligations under the AI Act become applicable, particularly for "high-risk" AI systems listed in Annex III (such as those used in employment or essential public services).

- August 2, 2027: Obligations become applicable for high-risk systems covered by the product legislation listed in Annex I, which includes the medical device regulations and therefore most AI-based Medical Devices.

- Ongoing: Adjustments proposed through the "Omnibus on AI" regulation may shift these dates, but the trajectory toward strict oversight is clear.
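For compliance planning, the two dated milestones above can be turned into a simple countdown. The Python sketch below hard-codes only the dates stated in this article, under hypothetical category labels, and computes which categories already apply on a given day and how many days remain before the others do. It assumes the dates do not move, which the pending "Omnibus on AI" adjustments could change.

```python
from datetime import date

# Dates as stated in the timeline above; the category labels are hypothetical.
APPLICATION_DATES = {
    "annex_iii_high_risk": date(2026, 8, 2),  # e.g. employment, essential services
    "annex_i_high_risk": date(2027, 8, 2),    # includes most AI-based medical devices
}


def days_until_applicable(category: str, today: date) -> int:
    """Days left before obligations apply to a category (0 once they apply)."""
    return max(0, (APPLICATION_DATES[category] - today).days)


def applicable_categories(today: date) -> list[str]:
    """Categories whose obligations already apply on the given day."""
    return [cat for cat, start in APPLICATION_DATES.items() if today >= start]


print(days_until_applicable("annex_i_high_risk", date(2026, 8, 2)))
print(applicable_categories(date(2026, 12, 1)))
```

Keeping the dates in a single table means a change introduced by the Omnibus only has to be made in one place.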

Institutional Strategy and Broader Implications

The release of these documents is a key component of the CNIL’s strategic plan for 2025-2028. The authority has identified AI as one of its four priority axes and has committed to auditing and inspecting AI systems to protect individuals under both the GDPR and the AI Act. In parallel, the HAS has integrated the management of digital and AI-related risks into its sixth certification cycle for healthcare establishments, which began in 2024. As a result, a hospital’s ability to manage AI safely will now directly influence its certification outcome.

The implications for the healthcare industry are significant. For developers, the framework offers a "compliance by design" blueprint that reduces legal uncertainty. For healthcare providers, it helps limit liability exposure and provides a method for ensuring that technological adoption does not compromise patient trust. For patients, it represents a commitment to fundamental rights: their most sensitive data should not be misused, and AI-assisted decisions should remain transparent and supervised by human professionals.

As France positions itself as a leader in "AI for Good," the collaboration between the CNIL and HAS sets a high bar for regulatory clarity. By treating AI development as a form of scientific research and deployment as a rigorous clinical process, the French authorities aim to foster an ecosystem where innovation and safety are not mutually exclusive but mutually reinforcing. If it succeeds, this framework may serve as a model for other EU member states as they navigate the complex transition to a regulated AI era.